I don’t consider myself very technical. I’ve never taken a computer science course and don’t know python. I’ve learned some things like Linux, the command line, docker and networking/pfSense because I value my privacy. My point is that anyone can do this, even if you aren’t technical.

I tried both LM Studio and Ollama, and I prefer Ollama. Once it’s installed, you download models and use them to have your own private, personal GPT. I access it on my local machine through the command line, and I also installed Open WebUI in a docker container so I can reach it from any device on my local network (I don’t expose services to the internet).
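For anyone wondering what talking to a local Ollama looks like outside the command line, here is a minimal sketch. It assumes Ollama is running on its default local port (11434) and that a model tagged `llama3` has already been downloaded; the model name and the helper names `build_generate_request` and `ask` are only illustrative, not something from the setup described above.

```python
import json
import urllib.request

# Assumption: Ollama is listening on its default local address and port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(prompt, model="llama3"):
    """Build (but do not send) a POST request for Ollama's /api/generate."""
    # "stream": False asks for one JSON reply instead of a token stream.
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, model="llama3"):
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_generate_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]
```

With Ollama running, something like `ask("Explain what a reverse proxy is in two sentences.")` should return the model's reply as a string. Nothing leaves the machine, since the request only goes to localhost.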

Having a private AI/GPT is pretty cool. You can download and test new models. And it is private. Yes, there are ethical concerns about how the models got their training data. I’m not minimizing those concerns. But if you want your own AI/GPT assistant, give it a try. I set it up in a couple of hours, and as I said… I’m not even that technical.

“learned some things like Linux, command line, docker, and networking/pfsense” “I don’t consider myself technical”

Don’t sell yourself short, I work in IT and have colleagues on our helpdesk who would struggle endlessly with those concepts.

I hereby dub you a tech person, like it or not, those skills can and do pay the bills.

Now that you’ve dubbed OP a tech person…

Hey OP, can you help me fix my printer? It’s only printing “RED RUM RED RUM” for some reason.

Have you tried giving it red rum?

Oh, and make sure you hold it out with the insides of your arms exposed, it’ll feel less threatening that way.

It is done.

This gave me confidence as well, thank you 😆

This made me smile. Thank you. The grass is always greener and I sometimes daydream of working in IT instead of healthcare. Maybe someday.

Nah, don’t.

Healthcare is pretty rough, I’d be willing to bet that the grass actually is greener in this case.

I am actually considering switching to healthcare (been a professional programmer)

I’ve had a burnout: I wish it was due to caring for people in need instead of a stupid deadline.

Besides, you can always do IT as a hobby/for free. Harder with healthcare, except maybe volunteering

You’ll be saving lives, yeah, but in between you’ll be dealing with entitled assholes that won’t follow directions and then yell at you because they didn’t.

It’s maybe easy to burn out in any career. Society has deprioritized individual fulfillment for most of us because it harms the nesting levels of billionaires’ yachts.

Thank you for this. I consider myself technical and those words felt like a punch in the gut.

I’m sorry if I offended. I can’t code or understand existing code and have always felt that technical people code. I guess I should expand my definition. Again, sorry that my words felt like a punch in the gut… wasn’t my intention at all.

It depends heavily on what you do and what you’re comparing yourself against. I’ve been making a living with IT for nearly 20 years and I still don’t consider myself to be an expert on anything, but it’s a really wide field, and what I’ve learned is that the things I consider ‘easy’ or ‘simple’ (mostly with Linux servers) are surprisingly difficult for people who’d (for example) wipe the floor with me if we competed on planning and setting up a server infrastructure or building enterprise networks.

And of course I’ve also met the other end of the spectrum. People who claim to be ‘experts’ or ‘senior techs’ at something are so incompetent at their tasks, or their field of knowledge is so ridiculously narrow, that I wouldn’t trust them with anything above first tier helpdesk, if even that. And the sad part is that those ‘experts’ often make way more money than me because they happened to score a job at some big IT company and their hours are billed accordingly.

And then there’s the whole other can of worms on forums like this, where ‘technical people’ range from someone who can install an operating system by following instructions to the guys who write assembly code for some obscure old hardware just for the fun of it.

I am going to be buying a monster high end machine and I want to do all the AI stuff on it.

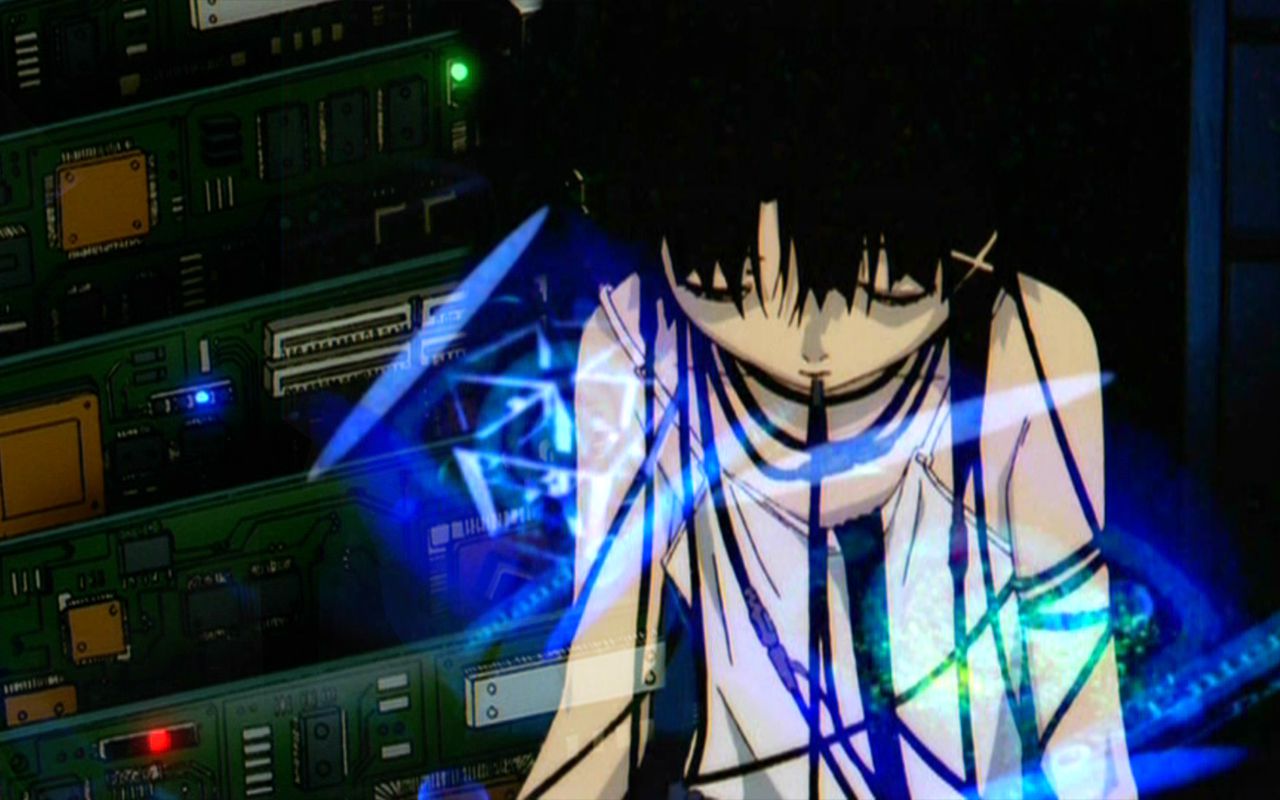

people need to take a step back and realize we have the capability to trap quasi-omnipotent quasi-demons in our personal computers

yeah they lie a lot and rarely do what you want them to, but that’s just what demons do

And it’s all powered by some dark crystals created with light magic that slowly poison the planet

that’s some arcane bullshit

Uncensored models are so much better, too. ChatGPT is like one of those plastic children’s toy hammers, while real models are titanium hammers.

Acronyms, initialisms, abbreviations, contractions, and other phrases which expand to something larger, that I’ve seen in this thread:

| Fewer Letters | More Letters |
| --- | --- |
| NVR | Network Video Recorder (generally for CCTV) |
| PSU | Power Supply Unit |
| VPN | Virtual Private Network |

3 acronyms in this thread; the most compressed thread commented on today has 11 acronyms.

[Thread #917 for this sub, first seen 12th Aug 2024, 07:15]

It’s a much smaller scale but I use a Coral TPU with CodeProject AI to detect when people or animals are in front of my house. Works well with Blue Iris (NVR software for security cameras). I like it. That’s all the self-hosted AI I’ve got for now.

Is there a way to host an LLM in a docker container on my home server but still leverage the GPU on my main PC?

You would need to run the LLM on the system that has the GPU (your main PC). The front-end (typically a WebUI) could run in a docker container and make API calls to your LLM system. Unfortunately that requires the model to always be loaded in the VRAM on your main PC, severely reducing what you can do with that computer, GPU-wise.
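To make the split a bit more concrete, here is a rough sketch of the home server side checking which models the GPU machine offers. It assumes the main PC runs Ollama on its default port and has been told to accept connections from the LAN (by default Ollama only listens on localhost; the `OLLAMA_HOST` environment variable changes that). The IP address in the usage note and the helper names are hypothetical.

```python
import json
import urllib.request


def ollama_url(host, path, port=11434):
    """Build a URL for an Ollama server running on another machine."""
    return f"http://{host}:{port}{path}"


def model_names(tags_response):
    """Extract model names from the JSON returned by Ollama's /api/tags."""
    return [m["name"] for m in tags_response.get("models", [])]


def list_remote_models(host):
    """Ask the GPU machine which models it has downloaded."""
    with urllib.request.urlopen(ollama_url(host, "/api/tags"), timeout=5) as resp:
        return model_names(json.loads(resp.read()))
```

From the home server, `list_remote_models("192.168.1.50")` would return the model tags available on the main PC, assuming that is its LAN address. A front-end in a container would be pointed at the same base URL.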

No?

Isn’t this using a lot of computing power?

Not really, it uses some GPU power when it’s actively generating a response, but otherwise it just sits idle.

you hear that said about AI because companies are desperately throwing more and more resources at it to get 0.3% better results, and people are collectively running an insane amount of prompts all the time.

but on a personal level it’s not really any different from any other computations, people render videos all the time and no one complains about the resource usage from that, because companies aren’t trying to sell bloated video rendering services to gardening businesses.

Have you found much practical use for small models yet? I love the idea that even the 1.1B tinyllama model can run on my phone, but haven’t found much real world use for it yet. Llama3 8b feels better, but not much better for even emails as it’s a bit dumb

I use my phone all the time, but I just use a WireGuard VPN to tunnel into my home container of Open WebUI. Then I can interact with my desktop machine using an NVIDIA GPU. I’m currently testing mistral-nemo. It’s pretty great but it gets a bit verbose sometimes.

I am also using open webui. Most LLMs are too verbose for me, so I created a model in open-webui with system prompt “Do not repeat the questions. Avoid giving lists as answers. Do not summarize the answer at the end. If asked a follow-up question, respond with only new information, do not repeat previously stated information.” and named it No Nonsense.
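If you talk to Ollama directly instead of going through Open WebUI, the same idea can be sketched as a system message in the chat request. This is only an illustration: Open WebUI stores the prompt in its own model settings, and the function name and the default model tag below are assumptions. The payload shape is the one Ollama's `/api/chat` endpoint accepts.

```python
import json

# The system prompt quoted above, used verbatim.
NO_NONSENSE = (
    "Do not repeat the questions. Avoid giving lists as answers. "
    "Do not summarize the answer at the end. If asked a follow-up question, "
    "respond with only new information, do not repeat previously stated information."
)


def build_chat_payload(question, model="mistral-nemo", system_prompt=NO_NONSENSE):
    """Build the JSON body for a chat request with a fixed system prompt."""
    return {
        "model": model,
        "messages": [
            # The system message comes first so it shapes every reply.
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": question},
        ],
        "stream": False,
    }


def to_json(payload):
    """Serialize the payload the way it would be sent over HTTP."""
    return json.dumps(payload)
```

POSTing `to_json(build_chat_payload("What is a VLAN?"))` to `/api/chat` on the Ollama host should give a terser answer than the model's default, though how well a given model obeys the prompt varies.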

for some reason chatgpt responds well to “no yapping”

What kinds of specs do you need to run it well? I’ve got a laptop with a 3070.

You probably want 48GB of VRAM or more to run the good stuff. I recommend renting GPU time instead of using your own hardware, via AWS or other vendors - runpod.io is pretty good.
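A back-of-the-envelope way to see where a number like 48GB comes from: the weights alone need roughly (parameter count × bits per weight ÷ 8) bytes. The sketch below is only that estimate. It ignores the context cache and runtime overhead, and the 1.2 headroom factor is a guess of mine, not a measured value.

```python
def weights_gib(params_billions, bits_per_weight):
    """Approximate memory needed for the model weights alone, in GiB."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


def probably_fits(params_billions, bits_per_weight, vram_gib, headroom=1.2):
    """Rough check: weights plus an assumed 20% headroom must fit in VRAM."""
    return weights_gib(params_billions, bits_per_weight) * headroom <= vram_gib


# An 8B model quantized to 4 bits is a little under 4 GiB of weights,
# while a 70B model at 4 bits is a bit under 33 GiB.
small = weights_gib(8, 4)
large = weights_gib(70, 4)
```

By this estimate an 8B model at 4-bit would fit on a card with 8 GB of VRAM (which is what I’d expect a laptop 3070 to have, but check your own), while a 70B model at 4-bit would want something in the 48GB range.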

Kinda defeats the purpose of doing it private and local.

I wouldn’t trust any claims a 3rd party service makes with regards to being private.

IDK, looks like 48GB cloud pricing would be $0.35/hr => $255/month. Used 3090s go for $700. Two 3090s would give you 48GB of VRAM and cost $1400 (I’m assuming you can do “model-parallel” with Llama; I’ve never tried running an LLM, but it should be possible and work well). So the break-even point would be <6 months. Hmm, but if Serverless works well, that could be pretty cheap. It would probably take a few minutes to process and load a ~48GB model on every cold start, though?
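The arithmetic above, written out so you can plug in your own numbers. The $0.35/hr rate and $1400 hardware cost are the figures from the comment; the `hours_per_day` parameter is my addition to show how much the answer depends on actual usage. Electricity and any PSU upgrade are not included.

```python
def monthly_cloud_cost(hourly_rate, hours_per_day=24):
    """Average monthly rental cost, using 365/12 days per month."""
    return hourly_rate * hours_per_day * 365 / 12


def break_even_months(hardware_cost, hourly_rate, hours_per_day=24):
    """Months of rental after which buying the hardware would have been cheaper."""
    return hardware_cost / monthly_cloud_cost(hourly_rate, hours_per_day)


# Rented around the clock: about $255.50 per month, break-even in ~5.5 months.
always_on = break_even_months(1400, 0.35)

# Rented only 2 hours a day: break-even stretches to more than five years.
light_use = break_even_months(1400, 0.35, hours_per_day=2)
```

So the "<6 months" figure holds if the rented GPU runs 24/7. For occasional use, pay-per-use rental stays cheaper for a long time, which is the case where the serverless option starts to look attractive.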

Assuming they already own a PC, if someone buys two 3090s for it they’ll probably also have to upgrade their PSU, so that might be worth including in the budget. But it’s definitely a relatively low cost way to get more VRAM; there are people who run 3 or 4 RTX 3090s too.